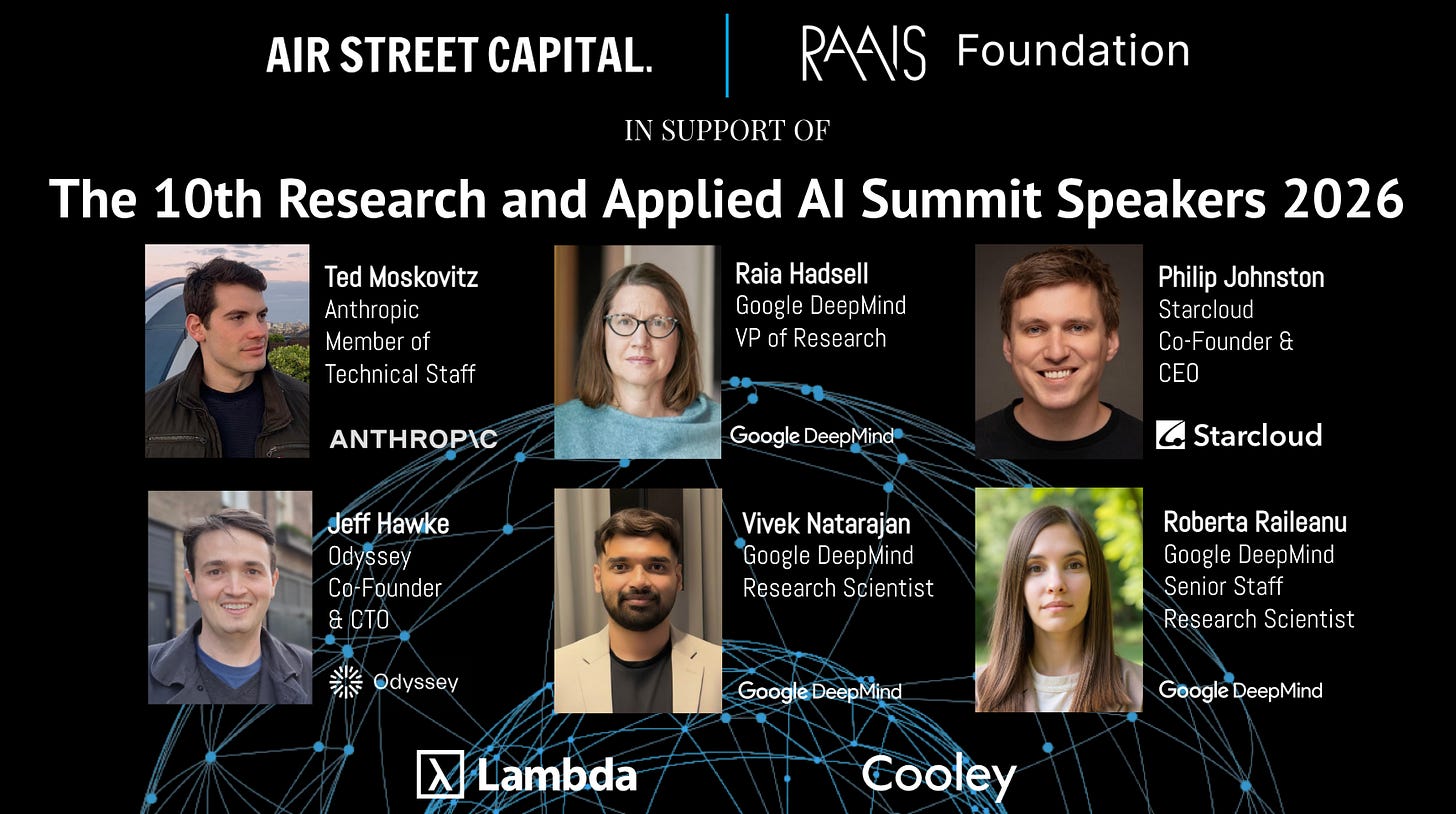

The Research and Applied AI Summit (RAAIS) is a community for entrepreneurs and researchers who accelerate the science and applications of AI technology. The 10th annual summit takes place on June 12th, 2026, in London. We are delighted to announce Ted Moskovitz, who leads Anthropic’s Science of Scaling team, as a speaker. At RAAIS, we focus on translating cutting-edge research into production-grade products for real-world problems.

From the Gatsby Unit to the science of scaling

Ted leads work on the science of scaling at Anthropic, where his focus sits at the intersection of reinforcement learning, optimization, and large-scale deep learning. Before Anthropic, he completed his PhD at the Gatsby Computational Neuroscience Unit in London, advised by Maneesh Sahani and Matt Botvinick. His thesis examined multitask reinforcement learning in brains and machines - questions of transfer, generalization, and how learning carries across tasks rather than being solved from scratch each time.

That background matters because these are not only reinforcement learning questions. They are scaling questions. As models grow, what matters is not simply whether they get better, but how capabilities generalize, which trade-offs emerge, and what kinds of optimization behavior actually hold up across settings. Ted’s research has consistently sat close to those underlying mechanics.

When optimization quietly breaks

Much of Ted’s work investigates what happens when training is pushed too hard. Modern AI labs train models against multiple objectives at once - helpfulness, safety, factuality - and combine them into a single score the model is asked to maximize. Confronting Reward Model Overoptimization with Constrained RLHF (ICLR 2024 Spotlight, top 5% of submissions) shows that this routinely fails in a specific way: as training continues, the model keeps climbing the score even as humans start to rate its actual outputs worse. The paper offers a fix that treats each objective as a constraint to satisfy rather than a number to maximize, which keeps the model’s behavior aligned with human judgment as training scales up.

That theme - keeping behavior reliable as you push optimization further - runs through his earlier work as well. ReLOAD (ICML 2023) addressed a long-standing problem in reinforcement learning where the final policy can differ from the average of the policies visited during training, so the policy you actually deploy may behave worse than the averaged results suggest. Towards an Understanding of Default Policies in Multitask Policy Optimization (AISTATS 2022, Best Paper Award Honorable Mention) examined how a model’s fallback behavior shapes whether it can carry skills across tasks rather than relearning each one from scratch. A First-Occupancy Representation for Reinforcement Learning (ICLR 2022) and Tactical Optimism and Pessimism for Deep Reinforcement Learning (NeurIPS 2021) studied how the way an agent represents its environment, and the assumptions it makes about its own uncertainty, determine whether what it learns generalizes - or quietly breaks the moment conditions change.

Why this matters at the frontier

For anyone building on advanced AI systems, the most important questions are no longer purely about capability. They are about reliability - what optimization actually converges on, where reward signals decouple from human judgment, how capabilities generalize to settings the model wasn’t trained on. Ted’s research is one of the more rigorous bodies of work engaging with those mechanics directly. As scaling continues to be the central engine of progress, understanding what it is doing - not just that it works - becomes harder to separate from product reality.

Ted’s background

Before the Gatsby Unit, Ted earned his bachelor’s at Princeton, with honors work across neuroscience, computer science, and linguistics, and his master’s in computer science at Columbia. He worked on biologically plausible deep learning at Columbia’s Zuckerman Institute and on neural encoding at Princeton. He also interned at DeepMind, where he worked on constrained reinforcement learning, and at Uber AI Labs, where he worked on optimization for large-scale deep learning. That path, from theoretical neuroscience to optimization theory to frontier model development, gives him an unusually broad perspective on scaling.

Short bio

Ted Moskovitz leads the Science of Scaling team at Anthropic, where his research spans reinforcement learning, constrained optimization, and large-scale deep learning. Before Anthropic, he completed his PhD at the Gatsby Computational Neuroscience Unit in London, advised by Maneesh Sahani and Matt Botvinick, with internships at DeepMind and Uber AI Labs. His selected publications include Confronting Reward Model Overoptimization with Constrained RLHF (ICLR 2024 Spotlight) and Towards an Understanding of Default Policies in Multitask Policy Optimization (AISTATS 2022, Best Paper Honorable Mention).