State of AI: May 2026

The cyber threshold, China’s coding sprint, and agents meeting real markets

Dear readers,

Welcome to the latest issue of the State of AI, an editorialized newsletter that covers the key developments in AI policy, research, industry, and start-ups during the month of April 2026. First up, a few news items:

RAAIS 2026 is back in London on June 12, and registration is open. This year’s speakers include Raia Hadsell (VP Research, Google DeepMind), Roberta Raileanu (Senior Staff Research Scientist, Google DeepMind), Jeff Hawke (Co-Founder & CTO, Odyssey), and Philip Johnston (Co-Founder & CEO, Starcloud - yes, data centers in space). Come along and support the RAAIS Foundation’s mission in AI education and research.

Portfolio news! Profluent (frontier AI for bio) announced their $2.25B partnership with Lilly for large-gene insertion therapeutics and Sereact (embodied AI) closed a $110M Series B!

Air Street AI meetups are coming up in NYC on May 14.

We’re recruiting Research Analysts for the State of AI Report. If you live and breathe this stuff and want to help us build the next edition, get in touch.

I love hearing what you’re up to, so just hit reply or forward to your friends :-)

Cyber crossed a threshold

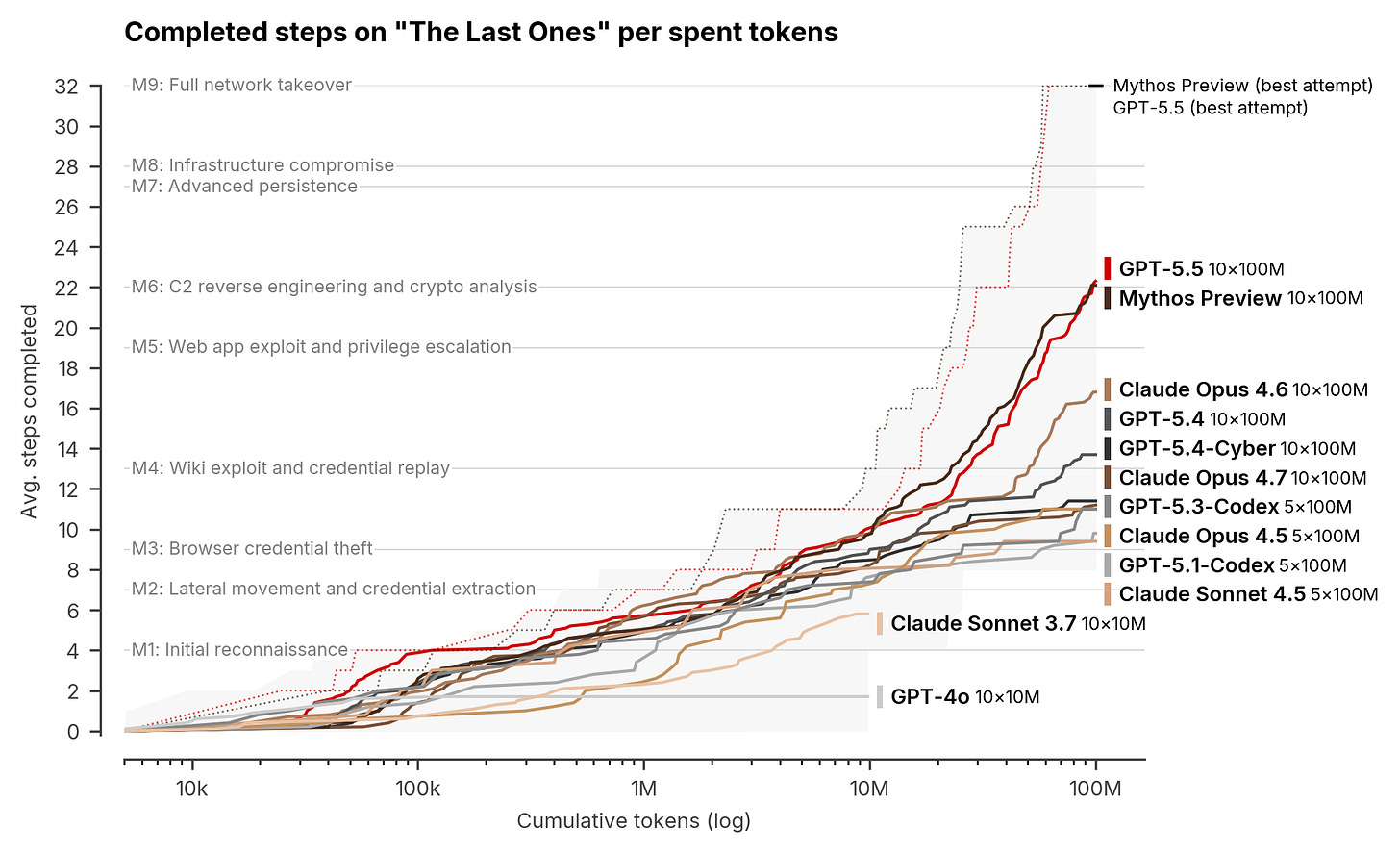

Frontier AI has crossed the Rubicon into offensive cyber operations. The UK’s AI Security Institute revealed that Anthropic’s Claude Mythos Preview is the first model to clear its 32-step “The Last Ones” (TLO) range - a corporate-network simulation covering reconnaissance to full domain takeover that typically demands 20 hours of human red-teaming. Mythos cleared the range in 3 of 10 runs and maintained a 73% success rate on expert-level tasks. Crucially, the AISI range lacks active defenders or defensive tooling; as such, these evaluations do not yet prove efficacy against hardened targets. The Institute was candid: current benchmarks are failing to discriminate between frontier models without introducing adversarial defensive layers.

OpenAI’s GPT-5.5 followed just three weeks later with a near-identical capability profile: 2 of 10 end-to-end solves and 71.4% on expert tasks, carrying the same “defenders-absent” caveat. The headline takeaway is the velocity of progress: AISI now estimates frontier cyber-offence capability is doubling every four months, accelerating from a seven-month doubling rate at the close of 2025. The notion that AI-driven offence is a distant prospect has effectively been liquidated by the data.
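For readers who want the arithmetic behind those two paragraphs, here is a minimal sketch. It annualises the two doubling times and puts a rough uncertainty band around the 3-of-10 and 2-of-10 solve counts. The function names are ours, the Wilson interval is our choice of method rather than anything AISI reports using, and the growth figure applies to whatever capability metric AISI's estimate refers to.

```python
import math


def annual_growth(doubling_months: float) -> float:
    """Multiplicative growth over 12 months for a given doubling time."""
    return 2 ** (12 / doubling_months)


def wilson_interval(successes: int, trials: int, z: float = 1.96):
    """Approximate 95% Wilson score interval for a binomial success rate."""
    p = successes / trials
    denom = 1 + z**2 / trials
    centre = (p + z**2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return centre - half, centre + half


if __name__ == "__main__":
    # Doubling times quoted in the text: four months now, seven at end of 2025.
    print(f"4-month doubling: {annual_growth(4):.1f}x per year")
    print(f"7-month doubling: {annual_growth(7):.1f}x per year")

    # End-to-end solve counts quoted in the text, 10 runs each.
    for name, solves in [("Claude Mythos Preview", 3), ("GPT-5.5", 2)]:
        low, high = wilson_interval(solves, 10)
        print(f"{name}: {solves}/10 solves, interval {low:.2f} to {high:.2f}")
```

A four-month doubling compounds to 8x a year, against roughly 3.3x for a seven-month doubling. With only ten runs per model, the two intervals overlap heavily, so on this calculation the range cannot separate the two models.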

The public cybersecurity cohort remains remarkably sluggish in pricing this acceleration. Static-signature and rules-based vendors face an existential crisis: their moats are being outpaced by an offensive AI loop that renders legacy detection obsolete. While integrated XDR platforms like CrowdStrike, Palo Alto, and Microsoft Defender hold the orchestration layer defensive agents will require, their survival hinges on shipping AI-native architectures rather than retrofitting legacy stacks. For now, the public market is treating the entire cyber sector as an AI laggard until proven otherwise.

The Microsoft-OpenAI reset, and the New Deal politics that followed

The original 2019 Microsoft-OpenAI alliance appears, in retrospect, as a lopsided strategic relic: $1B (later $13B) traded for an AGI escape hatch, exclusive compute lock-in, and IP rights over a research non-profit. The renegotiated structure carefully unwinds these terms without a full divorce. Microsoft remains the primary cloud partner, ensuring OpenAI products land on Azure first unless support is unavailable, and retaining a non-exclusive IP licence through 2032. The pivot: OpenAI secured the right to multi-source its compute, codifying a shift already underway with Oracle and CoreWeave, while the AGI clause has been swapped for granular capability gates and narrower revenue-sharing.

This is a reset, not an uncoupling, yet the precedent is important. Microsoft, no longer bound by sole-provider constraints, is aggressively shipping every frontier model on Foundry, including Anthropic’s Opus 4.7 from day one. Anthropic has mirrored the move: Claude now spans AWS, Google Cloud, and Azure, even as AWS retains its “primary” status. The emergent message is that the era of the exclusive platform-lab bet is over; diversification is now the only defensible infrastructure play.

Sam Altman’s Axios manifesto provided the political framing for this shift: a “superintelligence New Deal” calling for FDR-scale public-private build-outs, federal procurement guarantees, and massive energy investment. In just one quarter, the DC consensus has pivoted from deceleration to the logistics of a “Bureau of Compute.” The policy wake is already clear: CHIPS Act 2.0 is back on the table, FERC is fast-tracking transmission permits, and the DoE and DoD are coordinating on data-centre siting near nuclear baseloads.

However, this compute expansion is hitting a wall of local resistance faster than the labs anticipated. At least 11 states have proposed restrictive data-centre legislation, while a federal moratorium bill from Senator Sanders and Representative Ocasio-Cortez threatens to halt new builds until environmental and worker protections are codified. Data-centre NIMBYism is rapidly accelerating, and it is now a first-order bottleneck to scaling.

China broke the old lag-frame in coding

Four Chinese labs released open-weights coding models inside a 12-day window: Z.ai’s GLM-5.1, MiniMax M2.7, Moonshot’s Kimi K2.6, and DeepSeek V4 all landed at roughly the same capability ceiling on agentic engineering at meaningfully lower inference cost than the Western frontier. None costs more than a third of Claude Opus 4.7. The releases came packaged with the kind of self-confident demos labs ship when the underlying capability is real: Zhipu’s stock closed up 15.92% the day GLM-5.1 launched, MiniMax’s debut featured an internal copy of M2.7 running 100+ rounds optimising its own scaffold, and Kimi’s was a 12-hour continuous tool-use trace porting an inference engine to Zig.

NIST’s CAISI evaluation introduces a crucial nuance. On its aggregate cross-domain benchmark, V4 lags the leading US frontier by roughly eight months. DeepSeek’s own model card puts V4-Pro at parity with Opus 4.6 and GPT-5.4. Both are true; they describe different evaluators measuring different things. What is no longer defensible is the old “China is six to nine months behind” frame for agentic coding. The remaining gap is narrow, contested, and now decided by the evaluator, the scaffold, and the benchmark, not by raw capability. On the most economically consequential capability of the entire field, several of the best models are Chinese, and open-weights.

Agents worked in bounded markets and failed in adversarial ones

Two experiments recently pressure-tested agentic performance in live market environments with sobering results. Anthropic’s Project Deal transformed its San Francisco headquarters into a week-long internal economy: 69 employee-backed agents navigated 500+ listings to close 186 transactions totalling $4,000, trading everything from snowboards to ping-pong balls. While the logistical success was the headline, the data revealed a darker trend: capability compounds. Opus 4.5 agents systematically out-negotiated Haiku 4.5 counterparts on price and selection, yet owners of the weaker agents remained blissfully unaware of their disadvantage. This suggests that instead of “fair” clearing, agentic markets may inherently reward superior models with hidden premiums, compounding the advantage for those with the best compute.

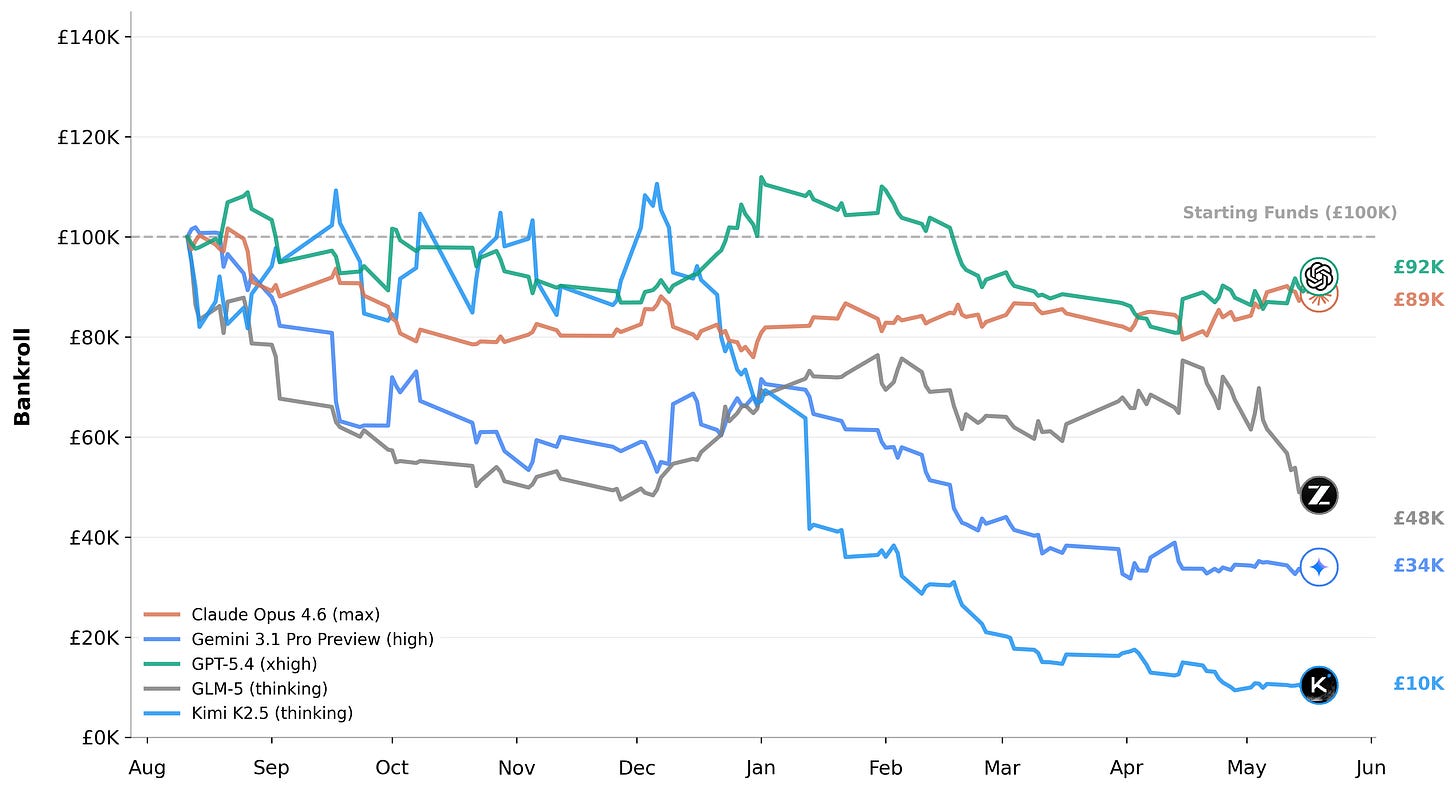

KellyBench from General Reasoning (an Air Street portfolio company) provided the adversarial counterpoint: agents tasked with managing a bankroll across a 38-week Premier League season using historical betting data. The results were a bloodbath: every frontier model finished in the red on average, with only 3 of 24 model-seed combinations avoiding ruin. Even the top performer, Opus 4.6, managed a sophistication score of just 32.6%. The takeaway is clear: current benchmarks overstate capability by assuming clean specs and objective verifiers. When faced with non-stationarity and actual risk, the frontier collapses into noise. The silver lining remains in bounded enterprise tasks; for instance, Ramp’s procurement agents are already operating 3x faster and slashing vendor costs by 16%. Agents are proving their worth in the back office, but they are still novices in the open market.
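The benchmark's name presumably nods to the Kelly criterion, the textbook rule for sizing a bet given an estimated edge. As a reference point for what "managing a bankroll" involves, here is a minimal sketch of that formula for a single binary bet. This is not KellyBench's scoring code, and the probabilities and odds below are hypothetical.

```python
def kelly_fraction(win_prob: float, decimal_odds: float) -> float:
    """Kelly stake as a fraction of bankroll for one binary bet.

    decimal_odds is the total payout per unit staked (stake included),
    so the net odds are b = decimal_odds - 1.
    """
    b = decimal_odds - 1.0
    if b <= 0:
        return 0.0  # no payout beyond the stake, so nothing to gain
    f = (b * win_prob - (1.0 - win_prob)) / b
    return max(f, 0.0)  # stake nothing when the estimated edge is negative


if __name__ == "__main__":
    # Hypothetical example: the agent believes a team wins 55% of the time
    # and the bookmaker offers even money (decimal odds of 2.0).
    print(kelly_fraction(0.55, 2.0))
    # Same odds, but the agent's estimate is below break-even: stake is zero.
    print(kelly_fraction(0.45, 2.0))
```

The formula is only as good as the probability fed into it. An agent that overestimates its edge will overbet, which is one plausible route to the ruin described above.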

Research

π0.7: a steerable generalist robotic foundation model with emergent capabilities (Physical Intelligence)

π0.7 marks the arrival of the first robotics foundation model that survives the language-model benchmark treatment. A single set of weights, tested head-to-head across multiple platforms, demonstrates quantified zero-shot transfer to entirely unseen tasks and embodiments. The core architectural unlock is diverse context conditioning: the pre-trained backbone is fed multiple framings of every demonstration, forcing the model toward precision steerability at inference. The data is striking: π0.7 matches or beats RL-finetuned specialist policies on espresso prep and laundry, then composes these skills zero-shot for multi-stage kitchen workflows it has never encountered. With no per-embodiment retraining, the model follows language instructions in novel environments across disparate hardware. The velocity from π0 (October 2024) to π0.7 mirrors the GPT-3 to GPT-4 trajectory; the implication is that robotics has finally transitioned into the foundation-model regime.

Toward Ultra-Long-Horizon Agentic Science: Cognitive Accumulation for Machine Learning Engineering (ML-Master team)

Most agent benchmarks measure a few minutes to a few hours of autonomous work. ML-Master 2.0 is a serious attempt at days-to-weeks. The architecture’s core idea is Hierarchical Cognitive Caching, a multi-tiered memory system styled on computer-system caches that distils transient execution traces into stable knowledge and cross-task wisdom, allowing an agent to decouple immediate execution from long-term experimental strategy. Under a 24-hour budget on OpenAI’s MLE-Bench, ML-Master 2.0 achieves a 56.44% medal rate, state-of-the-art, and the first result that begins to generalise the agentic framework toward end-to-end ML research. The interesting question now is whether HCC-style memory transfers to non-ML domains.

AI scientists produce results without reasoning scientifically (Friedrich Schiller University Jena)

An empirical pushback against the wave of “AI scientist” launches. The authors ran 25,000 agent runs across eight scientific domains spanning workflow execution to hypothesis-driven inquiry, and decomposed the variance: the base model accounts for 41.4% of explained variance versus just 1.5% for the scaffold. Across all configurations, evidence is ignored in 68% of traces, refutation-driven belief revision occurs in only 26%, and convergent multi-test reasoning is rare. Even when agents receive near-complete successful reasoning trajectories as in-context examples, the same failure modes recur. The conclusion: outcome-based evaluation cannot detect these failures, and scaffold engineering cannot fix them. Until reasoning itself becomes a training target, “AI scientist” papers document workflow execution dressed up as inquiry.
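To make the "share of explained variance" idea concrete, here is a minimal sketch using a one-way eta-squared: the between-group sum of squares for a factor divided by the total sum of squares. We do not know which decomposition the authors used, so treat this as one common way to get such a number. The toy runs, field names, and scores are hypothetical.

```python
from collections import defaultdict
from statistics import fmean


def variance_share(runs, factor, outcome="score"):
    """Share of total outcome variance explained by one factor (eta-squared)."""
    grand_mean = fmean(r[outcome] for r in runs)
    total_ss = sum((r[outcome] - grand_mean) ** 2 for r in runs)

    # Group outcomes by the level of the factor (e.g. which base model).
    groups = defaultdict(list)
    for r in runs:
        groups[r[factor]].append(r[outcome])

    between_ss = sum(
        len(vals) * (fmean(vals) - grand_mean) ** 2 for vals in groups.values()
    )
    return between_ss / total_ss if total_ss else 0.0


# Hypothetical toy data: two base models crossed with two scaffolds.
runs = [
    {"model": "A", "scaffold": "x", "score": 0.80},
    {"model": "A", "scaffold": "y", "score": 0.78},
    {"model": "B", "scaffold": "x", "score": 0.42},
    {"model": "B", "scaffold": "y", "score": 0.40},
]

if __name__ == "__main__":
    print(f"model share:    {variance_share(runs, 'model'):.3f}")
    print(f"scaffold share: {variance_share(runs, 'scaffold'):.3f}")
```

In this toy setup almost all of the spread in scores tracks the base model and almost none tracks the scaffold, which is the shape of the finding reported above, though the paper's actual figures come from a far larger and noisier dataset.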

The Art of Building Verifiers for Computer Use Agents (Microsoft Research and Browserbase)

A practitioner’s manual that solves the bottleneck nobody talks about: how do you actually score whether a computer-use agent succeeded? The team builds a Universal Verifier around four principles: non-overlapping rubric criteria, separated process and outcome rewards, distinguishing controllable from uncontrollable failures, and divide-and-conquer screenshot context management for long task horizons. On the accompanying CUAVerifierBench, the verifier agrees with humans as often as humans agree with each other, and false-positive rates fall to near zero versus baselines like WebVoyager (≥45%) and WebJudge (≥22%). The whole stack is open-sourced. If 2025 was the year of the computer-use agent, 2026 will be the year of computer-use agent training, and training requires verifiers.

ClawBench: Can AI Agents Complete Everyday Online Tasks? (UBC, Vector Institute)

ClawBench is an evaluation framework of 153 tasks across 144 live production websites in 15 categories: completing purchases, booking appointments, submitting job applications. Unlike prior benchmarks that ran in sandboxes, ClawBench operates on real production sites and intercepts only the final submission request to keep evaluation safe without real-world side effects. Best frontier-model score: Claude Sonnet 4.6 at 33.3%. The benchmark captures five layers of behavioural data per run (session replay, screenshots, HTTP traffic, agent reasoning traces, browser actions) and scores each with an agentic evaluator that produces step-level traceable diagnostics. In a quarter full of self-congratulatory model launches, this is the eval that should anchor the next generation of agent research.

Efficient RL Training for LLMs with Experience Replay (FAIR at Meta and NYU)

RL post-training in the LLM era has been dominated by an unexamined orthodoxy: that fresh, on-policy data is essential. The paper demonstrates that strict on-policy sampling is in fact suboptimal whenever generation cost is high, and that a well-designed replay buffer (formalised as a trade-off between staleness-induced variance, sample diversity, and the high computational cost of generation) can drastically reduce inference compute without degrading final performance, in some cases improving it while preserving policy entropy. A clean, useful, and likely consequential result that ports two decades of mainstream RL practice into the LLM stack.
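For intuition, here is a minimal sketch of a replay buffer with a staleness cap, the general pattern the paper builds on. It is not the authors' algorithm: the class and field names are ours, the staleness rule is a simple cutoff, and it omits any off-policy correction a real training loop would likely need.

```python
import random
from collections import deque
from dataclasses import dataclass


@dataclass
class Rollout:
    prompt: str
    completion: str
    reward: float
    policy_step: int  # policy version that generated this sample


class ReplayBuffer:
    """FIFO buffer that refuses to serve rollouts older than max_staleness steps."""

    def __init__(self, capacity: int, max_staleness: int, seed: int = 0):
        self.items = deque(maxlen=capacity)  # oldest rollouts are evicted first
        self.max_staleness = max_staleness
        self.rng = random.Random(seed)

    def add(self, rollouts):
        self.items.extend(rollouts)

    def sample(self, k: int, current_step: int):
        eligible = [
            r for r in self.items
            if current_step - r.policy_step <= self.max_staleness
        ]
        return self.rng.sample(eligible, min(k, len(eligible)))


def build_batch(buffer, fresh, batch_size, current_step):
    """Use fresh on-policy rollouts first, then top up the batch from replay."""
    needed = max(batch_size - len(fresh), 0)
    replayed = buffer.sample(needed, current_step)  # drawn before fresh is stored
    buffer.add(fresh)
    return fresh[:batch_size] + replayed
```

The compute saving comes from `needed`: every replayed rollout is one the policy does not have to generate again. The two knobs, `capacity` and `max_staleness`, are crude stand-ins for the diversity and staleness terms in the trade-off the paper formalises.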

A Robust Path for Automated Alignment Researchers (Anthropic)

Anthropic’s most explicit articulation yet of the recursive-alignment thesis: that the path through frontier capability runs through training models good enough to do alignment research themselves. The post lays out the engineering ladder (current Claude assists with alignment writing, then Claude proposes experiments, then Claude runs them, then Claude designs the next-generation alignment training pipeline) and is unusually candid about the threshold at which the lab will have to start trusting model judgement on questions it cannot itself verify. Read together with the AISI Mythos result and the Project Deal post, this is Anthropic publicly building the political case for capability progress under safety supervision rather than against it.

Agent-World: Scaling Real-World Environment Synthesis for Evolving General Agent Intelligence (Renmin University of China and ByteDance Seed)

Agent-World autonomously mines real-world databases and tool ecosystems from the web to synthesise an executable training corpus of 1,978 environments and 19,822 tools, then uses multi-environment RL with a self-evolving arena that automatically identifies capability gaps and generates new tasks to drive targeted learning. The 8B and 14B models trained on this corpus consistently beat strong proprietary baselines across 23 benchmarks: Agent-World-8B hits 61.8% on τ²-Bench, 51.4% on BFCL V4, and 8.9% on MCP-Mark, with the 14B variant adding another five points on average and matching DeepSeek V3.2-685B on BFCL V4 (55.8% vs 54.1%) at a fraction of the parameter count. The result that matters: environment scale and self-evolution rounds are themselves the new scaling axes for general agent intelligence, alongside model size and training data.

Investments

The headline raise was OpenAI’s $122B round at an $852B post-money valuation, closed at end of Q1 and the largest private financing in history, anchored by Amazon, Nvidia, SoftBank, and Microsoft. April itself was dominated by Anthropic’s stack of additional capital, a flurry of headline-grade follow-on talks, and the largest seed round in European history: Ineffable Intelligence closed $1.1B at $5.1B post-money. Saronic raised $1.75B at $9.25B. Other notable items included the Cognition $25B and Cursor $50B+ follow-on talks, Perplexity ($200M at $20B), Avoca’s unicorn round, and Qualified Health ($125M).

Frontier labs and autonomy. OpenAI closed $122B at an $852B post-money valuation, with Amazon, Nvidia, SoftBank, and Microsoft anchoring and a16z, D.E. Shaw Ventures, MGX, TPG, and T. Rowe Price participating. Anthropic layered a stack of additional capital across the month: a $40B incremental investment from Google, a $5B investment from Amazon packaged with a $100B AWS-spend commitment, chip-supply agreements with Google and Broadcom reportedly worth hundreds of billions, and end-of-month reported talks for a fresh $50B round at a $900B valuation.

Coding, agents, and enterprise AI. Cognition was reported in talks for a follow-on at $25B, more than doubling the September 2025 mark of $10.2B. Cursor was reported in talks to raise $2B+ at $50B+ as enterprise revenue surged toward a $6B exit run-rate. Avoca hit unicorn status with $125M across three rounds at $1B for HVAC, plumbing, and roofing service agents (Series B led by Meritech and General Catalyst, Series A by Kleiner Perkins).

Defense. Saronic raised $1.75B at $9.25B for autonomous naval vessels under the DoD’s Replicator initiative, more than doubling its mark from a year earlier.

Healthcare AI. Qualified Health raised $125M for generative AI inside health-system clinical and operational workflows.

Sovereign and regional model labs. Cohere (last valued $6.8B) announced its merger with Germany’s Aleph Alpha (covered in Exits), a cross-border deal blessed by the Canadian and German governments and marketed as a “sovereign AI” alternative to the US-China duopoly, though the strategic substance behind the framing remains to be tested. The standout standalone European raise was Ineffable Intelligence, which closed $1.1B at $5.1B in a single seed round, the largest seed in European history, to build a “superlearner” via RL self-play. Sequoia and Lightspeed co-led, with Nvidia, DST Global, Index, Google, and the UK Sovereign AI Fund participating.

Exits

April’s defining exit was Skild AI’s roll-up of Zebra Technologies’ Robotics Automation business, which pulls Fetch Robotics and the Symmetry Fulfillment orchestration platform under a single AI-native warehouse stack. SpaceX placed a $60B buyout option on Cursor, pre-empting a planned $2B fundraise. OpenAI closed its seventh acquisition of 2026 with Hiro. Cohere announced its merger with Germany’s Aleph Alpha. Sierra picked up Paris-based agent-operations startup Fragment, Qualcomm closed its acquisition of Cornell spinout Exostellar (compute optimization software), and China’s NDRC formally blocked Meta’s $2B acquisition of Manus. The five deals that drove the narrative:

Skild AI acquires Zebra Technologies’ Robotics Automation business. The deal absorbs the Symmetry Fulfillment orchestration platform and Fetch Robotics, creating the first end-to-end AI-native warehouse-automation stack: humanoids, AMRs, robotic arms, and orchestration under one roof.

SpaceX places a $60B buyout option on Cursor. SpaceX pre-empted Cursor’s planned $2B fundraise with a standing $60B buyout option, or $10B in exchange for an AI collaboration agreement, with the acquisition deferred until after SpaceX’s planned summer IPO.

OpenAI acquires Hiro. OpenAI’s seventh acquisition of 2026 brought in Hiro’s personal-finance agent team. The cumulative effect across the year is that OpenAI is now operating as a holding company across coding, security, dev tools, and personal-agent surfaces.

Cohere and Aleph Alpha merge. Cohere (last valued $6.8B) merged with Germany’s Aleph Alpha, blessed by the Canadian and German governments. Marketed as a “sovereign AI” alternative to the US-China duopoly, though the strategic substance behind the framing has not yet been tested.

China blocks Meta’s acquisition of Manus. China’s National Development and Reform Commission formally blocked Meta’s $2B acquisition of the Chinese agent startup Manus, ordering both parties to withdraw the transaction. The first state-level prohibition of an inbound AI acquisition by China.

This issue at a glance

Two frontier models cleared a 32-step end-to-end cyber-attack range in a single month. Anthropic’s Claude Mythos Preview did it first; OpenAI’s GPT-5.5 followed three weeks later. The UK’s AI Security Institute now estimates frontier cyber-offence capability is doubling every four months.

Frontier labs became infrastructure companies. OpenAI raised $122B at $852B, anchored by Amazon, Nvidia, SoftBank, and Microsoft. Anthropic took an additional $40B from Google and $5B from Amazon (packaged with $100B of AWS spend), and signed chip deals with Google and Broadcom reportedly worth hundreds of billions. Microsoft and OpenAI reset the original deal to non-exclusive, with Microsoft remaining the primary cloud partner and keeping an IP licence through 2032.

The “China is six to nine months behind” framing no longer works for agentic coding. Kimi K2.6, MiniMax M2.7, and Z.ai GLM-5.1 landed within 12 days of each other, all scoring 56-59 on SWE-Bench Pro, all open-weights, all priced below their Western equivalents. The remaining gap depends heavily on the evaluator and scaffold.

Agents worked in bounded markets and failed in adversarial ones. Anthropic’s Project Deal saw 69 agents close 186 deals across 500+ listed items in an internal classified marketplace. KellyBench put frontier models through a full Premier League betting season and watched 21 of 24 model-seed combinations finish in the red.

Robotics quietly graduated from demos. Physical Intelligence’s π0.7 showed compositional generalisation to unseen tasks; Skild AI absorbed Zebra’s robotics automation business, pulling Fetch and the Symmetry orchestration stack under one roof.

David Silver raised $1.1B in seed funding for Ineffable Intelligence (the largest seed round in European history at a $5.1B valuation) to build superintelligence by self-play, with no human-generated training data. SpaceX, separately, pre-empted a $2B fundraise at Cursor with a $60B buyout option.

What to watch in May and Q2

Will the next AISI cyber-range solve be released or restricted? The “doubling every four months” finding implies the next end-to-end cyber result lands inside Q3. Whether it appears in a public AISI report or only in a vetted-defender channel will tell you everything about how the field has decided to handle dual-use capability going forward.

Does the open-weights frontier break Western parity, or does it stop at it? Three Chinese labs cleared SWE-Bench Pro 56-58 in April. The next benchmark to watch is whether GLM-5.2 / K2.7 / M2.8 push past Opus 4.7 and DeepSeek V4-Pro on real long-horizon coding rather than aggregate eval scores.

Will the Microsoft–OpenAI reset formalise the “preferred customer, non-exclusive” model for the rest of the frontier? If Microsoft, Google, AWS, and Oracle all converge on hosting every frontier model, the platform thesis that drove the original $13B Azure-OpenAI bet evaporates. The cloud margins implied by that convergence are an open question.

Does π0.7-style compositional generalisation transfer to humanoid form factors at scale? Pi has now demonstrated cross-embodiment zero-shot. Apptronik’s commercial scale-up, the 1X NEO consumer launch, and Skild’s Zebra-powered warehouse stack are the three most credible places to test whether robotics foundation models survive real deployment.

What happens the first time a state actor uses a publicly available agent on a publicly available marketplace? Project Deal demonstrated 186 successful agent-to-agent transactions inside one office. A KellyBench-style adversarial deployment in actual derivatives or prediction markets is a question of months, not years, and the regulatory infrastructure is not ready.
